We designed and implemented an autonomous helicopter flight system as a senior design project in 2007. The system allows an RC helicopter to be flown without a pilot.

The room looks darker in the video than it actually was because I lowered the camcorder's exposure so that the screen would be readable. But yes, we did dim the room to increase the reliability of the image processor. I knew the image processor worked with the lights on, but its reliability definitely decreased. With our $1,000 budget, we could not afford even a small possibility of failure!

Since we were still adjusting the controller parameters, the helicopter looks a little unstable; this could have been improved with further tuning. Other than the shakiness, the helicopter flew pretty accurately. In other words, in the video the helicopter flew accurately but not very precisely.
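To make the accurate-versus-precise distinction concrete, here is a minimal sketch in Python. The function name and the sample positions are made up for illustration; they are not measurements from our flights. Accuracy is taken as how close the average position is to the target, and precision as how little the position scatters around its own average.

```python
from statistics import mean, pstdev


def accuracy_and_precision(samples, target):
    """Return (bias, spread) for position samples around a target.

    bias   = |mean(samples) - target|     -> small means accurate
    spread = population std. deviation    -> small means precise
    """
    bias = abs(mean(samples) - target)
    spread = pstdev(samples)
    return bias, spread


# Hypothetical hover positions in metres; NOT measured flight data.
shaky_but_centered = [0.9, 1.1, 0.8, 1.2, 1.0]       # accurate, not precise
steady_but_offset = [1.29, 1.31, 1.30, 1.30, 1.30]   # precise, not accurate

bias_a, spread_a = accuracy_and_precision(shaky_but_centered, target=1.0)
bias_b, spread_b = accuracy_and_precision(steady_but_offset, target=1.0)

print(f"shaky but centered: bias={bias_a:.3f} m, spread={spread_a:.3f} m")
print(f"steady but offset:  bias={bias_b:.3f} m, spread={spread_b:.3f} m")
```

The flight in the video resembles the first case: the helicopter stays around the right spot on average but wobbles around it.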

This flight was taped just a couple of hours before our presentation at E-Week (an annual engineering event at UNL). But thanks to a bomb threat, the event was cancelled!

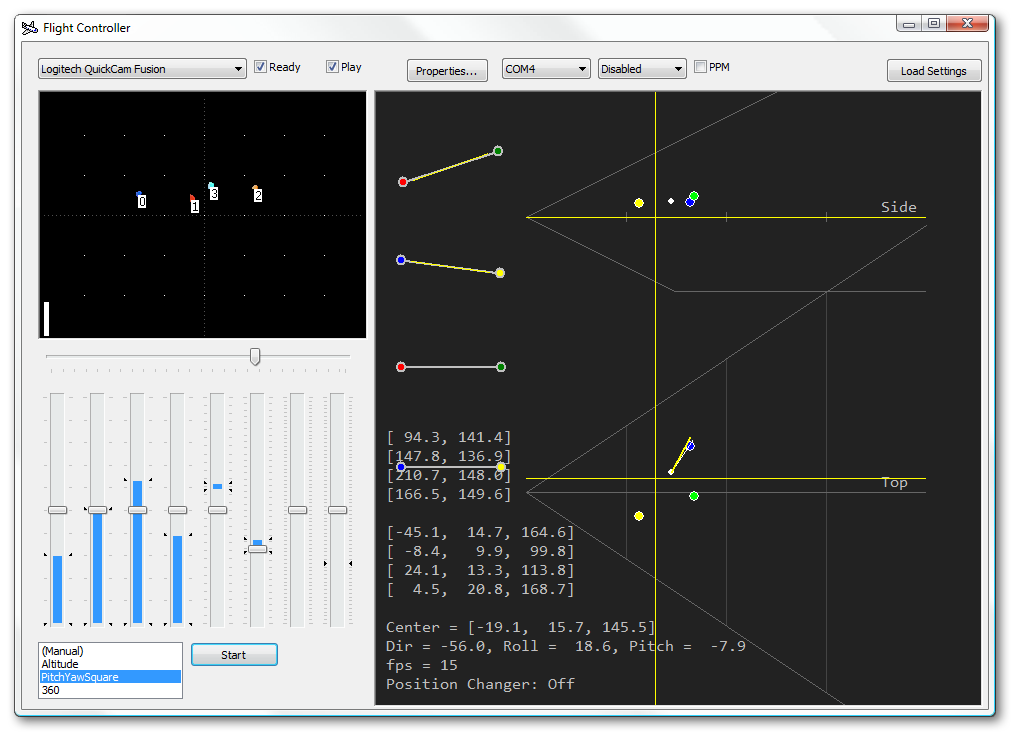

Screenshot of the helicopter controller during the development phase. It shows some internal data, the processed image, and the position and attitude of the helicopter. The software can read the onboard sensor values, but this feature was disabled for the flight. Since I was the only one developing and using the software in this phase, the user interface was not designed for general operators. Instead, it is designed so that I can monitor the system at a glance by reading the plotted information and the values of the internal matrices. The interface also lets me make quick adjustments when needed. Other settings, such as the controller parameters, are kept in configuration files.

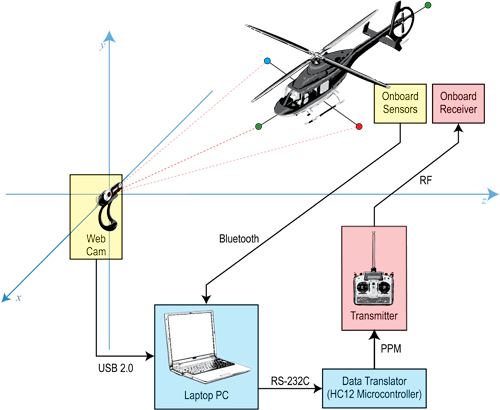

The position of the helicopter is detected by a single webcam and nothing else. My group members successfully developed an onboard sensor system using a sonar, gyroscope, accelerometer, and magnetometer as part of our backup plan. The onboard system communicates with my laptop via Bluetooth. Fortunately (at least for me), my image processor worked exceptionally well by itself, so the onboard system was not used in the calculations during the flight even though it was fully functional.

The steps of the image processing are as follows:

By the way, I came up with this logic from scratch while I was walking, talking, and sleeping. I think the procedure can be derived with sophomore-level knowledge and a good whiteboard on your door. Many people ask for reference documents about it, but no such thing exists :) For more details, please take a look at our report (PDF).

The image from the webcam is processed by the laptop computer, and the computer controls the helicopter via the microcontroller. Onboard sensors were implemented and worked well, but they were not used by the controllers since the image processor was reliable.
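The data flow above (camera measurement, controller on the laptop, command to the helicopter) can be sketched as a simple feedback loop. This is only a toy illustration, not our actual software: the PD controller, its gains, and the one-dimensional altitude model are all assumptions chosen to keep the example short, and the real webcam and microcontroller links are replaced by stand-ins.

```python
from dataclasses import dataclass


@dataclass
class PDController:
    """Hypothetical proportional-derivative controller (an assumption;
    the controller type used in the project is not described here)."""
    kp: float
    kd: float
    prev_error: float = 0.0

    def update(self, error: float, dt: float) -> float:
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.kd * derivative


def run_loop(steps: int = 200, dt: float = 0.05, target: float = 1.0) -> float:
    """Simulate altitude hold with a toy 1-D model; return the final altitude."""
    controller = PDController(kp=4.0, kd=3.0)  # made-up gains
    altitude, velocity = 0.0, 0.0
    for _ in range(steps):
        # Stand-in for the webcam + image processor: a perfect position reading.
        measured = altitude
        # Laptop side: compute the command from the position error.
        command = controller.update(target - measured, dt)
        # Stand-in for microcontroller + helicopter: command acts as acceleration.
        velocity += command * dt
        altitude += velocity * dt
    return altitude


if __name__ == "__main__":
    print(f"final altitude: {run_loop():.4f} m")
```

Tuning `kp` and `kd` in a loop like this is the kind of parameter adjustment that trades shakiness against responsiveness.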

Paying attention to safety was particularly important for this project. Helicopters can kill people with the main rotor. A skilled human pilot always monitored the flight throughout testing so that he could take control immediately if anything went wrong with the system. The helicopter was maintained very carefully to minimize the risk of mechanical failure. We are proud that there were no major crashes during the project.

Copyright © 1997-2008 Yutaka Tsutano.